-

Posts

13,456 -

Joined

-

Last visited

-

Days Won

39

Content Type

Profiles

Forums

Events

Posts posted by The Monkey

-

-

This forum is completely fucked up...and I like it! But I still don't get it...I'm sure I will eventually.

Nick, here's what Jude posted on the Head-Fi Facebook Group page:

Here's a status update (which I will also post on the error screen for those who visit http://www.head-fi.org):This is obviously our worst outage in the history of Head-Fi.org. What happened was that we had Head-Fi.org's files and backups moved to a multi-terabyte network attached storage (NAS) unit while we continued to work on the proper implementation of a true clustering configuration for Head-Fi.org. From what we can tell, this particular NAS unit--with a reputation for being ultra-reliable--had one of its 12-channel RAID controllers malfunction.

This particular NAS unit is a 24-drive unit, made up of two 12-drive arrays, each array with two parity drives (RAID 6). Maybe we put too much faith in it, but we thought were safe housing everything on it for the time being (the last several months). From what we're being told, when the controller card malfunctioned, it messed up the NAS unit's logical volume, which is where we're at now. We are working closely with the vendor and the technical support team in Europe to restore the logical volume and get the NAS back up again. We feel reasonably confident we will be able to restore Head-Fi to its state just before its outage, but won't know for sure if we'll have to fall back to a back-up, of which there are several on the NAS. Unfortunately, the only off-NAS backups we have of Head-Fi.org's databases are quite old, meaning we'd lose thousands of posts, so I will not put Head-Fi.org back up until we know for sure the status of the logical volume restoration.

All I can do is apologize for this very extended outage, and for not having more recent off-NAS backups. Again, we hope to have the site back up and running fully tomorrow evening, but I will keep you up to date if that changes.

The repair was well under way today when the repair process ran out of RAM (the NAS had four gigabytes of RAM). Since, for a number of reasons, the repair process was being run almost entirely from RAM, the four gigs was apparently not enough). I have ordered 16 gigabytes of RAM, which will arrive tomorrow before noon EST, whereupon the team in Europe can commence with the remotely administered repair process(es). The process has gone slower than we anticipated, and running out of RAM today was an unfortunate setback. But, once again, 16 gigabytes of RAM (in the form of eight DDR2, ECC registered 2GB cards--versus the four 1-gigabyte cards in there now) should be arriving in the morning. whereupon we call our friends in Europe and they finish the repair work. We already know some data was lost, but hope and pray that what we do retrieve will be enough to let us get the site back up tomorrow evening.

We know we should have been more diligent about keeping more backups off the NAS, but running two 12-drive arrays, each array in RAID 6 (two parity drives, for a total of four)--and the fact that our previous NAS units ran without problems for seven years--we felt we were safe in keeping them there until we were finally through with the proper clustering we've intended for months. All I can do is apologize for this very extended outage, and for not having more recent off-NAS backups. Again, we hope to have the site back up and running fully tomorrow evening, but I will keep you up to date if that changes.

-

Monkey I don't think nicknutson can access that

lol, the first rule of head-case....

And I've only had 2 beers.

And I've only had 2 beers.Sorry, Nick, what Nate said.

-

It has a long way to go. EDITED for unhelpful link.

-

There was a CD3000 paired with the Woo 5 for part of the day, correct?

-

Fart loudly and often. People will learn to steer clear.

-

I'm a Jets fan. Let's just say that I am encouraging my son to hate them.

-

But really, my words fail at the hilarity of the image.

good christ, that image is hilarious and disturbing on many levels.

-

Some pics. Still figuring out the damn white balance.

My setup of the HR Balanced Desktop, HR Millet(t) Hybrid, and Desktop PS. I also had my balanced HD 650 and HF-1 there.

Singlepower's Transparency Preamp.

Ian's excellent sounding HF-1.

Nate's balanced setup.

Ari's Toaster. This amp looks so much better in person.

RSA's power supply goes topless with audiophile-grade alligator clips.

HE-90 coming out of this. I've learned not to start the day listening here.

Woo amps.

Not sure whose iPod docks these were.

RSA's Apache.

RSA's Predator.

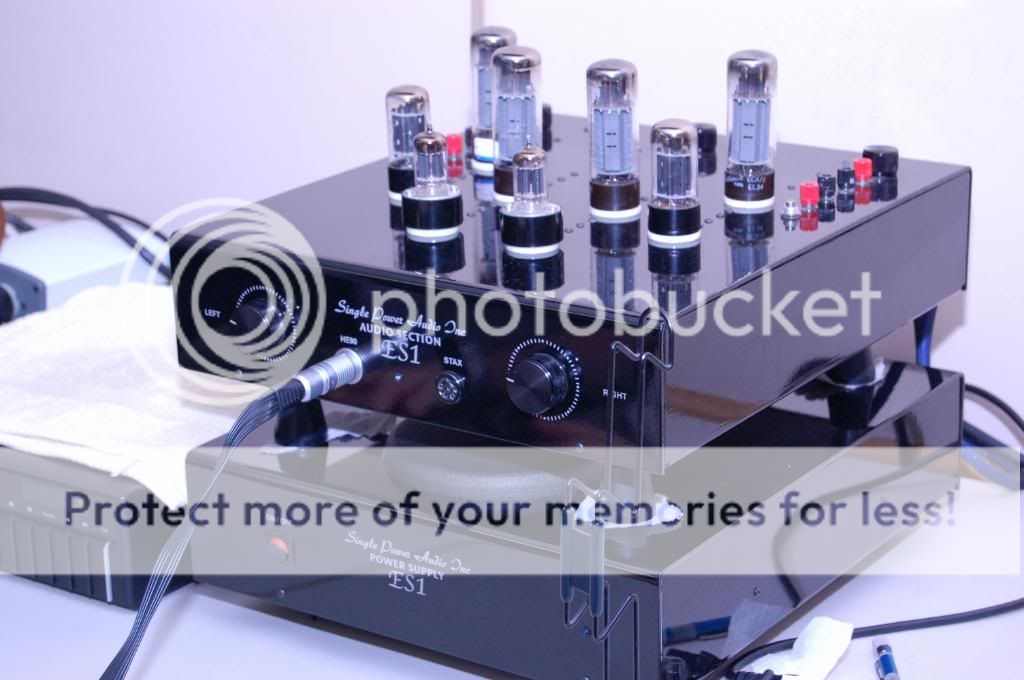

Singlepower Extreme.

-

HF-1

in Headphones

I heard Ian's Zebra HF-1 today at the NYC(ish) Meet, and I really enjoyed it. I prefer it to my newly balanced, but otherwise stock HF-1, but I need more time with those before a final verdict. I like that Ian's mods tame most of the sibilance that continues to bother me with mine.

-

Nice to see everyone today. USG, thanks very much for the name tags; they turned out great.

Big Fat Dots?

in Speakers

Posted

Has anyone used Herbie's Big Fat Dots with their loudspeakers? Any impressions?